
Link extractor scrapy

1/17/2024

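The CrawlSpider class has the same attributes as a standard Spider, plus a rules attribute: a list of Rule objects, each of which defines how the site is crawled. Two LinkExtractor parameters control how extracted values are handled:

Process_value: A function that receives each value extracted from the scanned tags and attributes. It may modify the value and return a new one, or return None to ignore that link.

Unique: Controls whether duplicate links are filtered out. When it is True (the default), a link that appears several times is returned only once.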

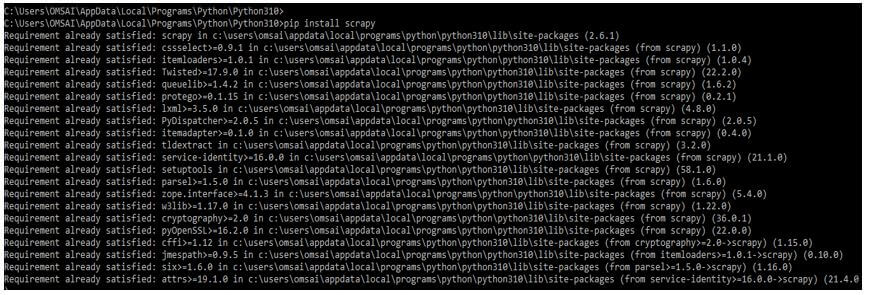

The CrawlSpider class uses link extractors in its rules; a link extractor is an object whose sole job is to extract links from responses. A link extractor is meant to be created once, after which its extract links method can be called many times with different responses. The follow flag of a rule indicates whether the links in each response matched by that rule should also be followed. Because we set it to True, we also obtain any nested URLs.

The steps below show how to build a Scrapy LinkExtractor:

1. Install Scrapy with the pip command (pip install scrapy). In our example, Scrapy is already installed on the system, so pip reports that the requirement is already satisfied and nothing else needs to be done.
2. After installing Scrapy, open the Python shell with the python command.

The LinkExtractor accepts the following parameters:

- Allow: A regular expression, or a list of them, that a URL must match to be extracted. If it is not given (or is empty), all links match.
- Deny: A regular expression, or a list of them, that excludes matching URLs. It takes precedence over allow. If it is not given (or is empty), no links are excluded.
- Allow_domains: A single string or a list of domains from which links will be extracted.
- Deny_domains: A single string or a list of domains from which links will not be extracted.
- Deny_extensions: A single value or a list of file extensions to ignore when extracting links. If it is not given, it defaults to scrapy.linkextractors.IGNORED_EXTENSIONS.
- Restrict_xpaths: An XPath, or a list of them, defining the regions of the response from which links are extracted. If it is given, only the content selected by those XPaths is scanned for links.
- Restrict_css: Works the same way as restrict_xpaths, but selects the regions of the response with CSS selectors.
- Tags: A tag or a list of tags to consider when extracting links (default: a and area).
- Attrs: An attribute or a list of attributes to consider when looking for links in those tags (default: href).
- Canonicalize: Whether to convert each extracted URL to a standard (canonical) form (default: False).
Scrapy includes built-in link extractors, such as scrapy.linkextractors.LinkExtractor. A link extractor has a single public method, extract_links, which takes a Response object as an argument and returns a list of scrapy.link.Link objects. By implementing this simple interface, we can create our own link extractor to meet our needs.

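A Response object represents the HTTP response that a web server returns for a request; Scrapy's downloader builds it and passes it back to the spider that issued the request.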
